Introduction to Open Source Software

Jonathan Byrne

Urban Modelling Group

University College Dublin

Ireland

Overview

- The origins of Unix, Linux and open source

- Taco bell programming

- Version control

- Profiling

- Parallelising your code

Why learn Unix?

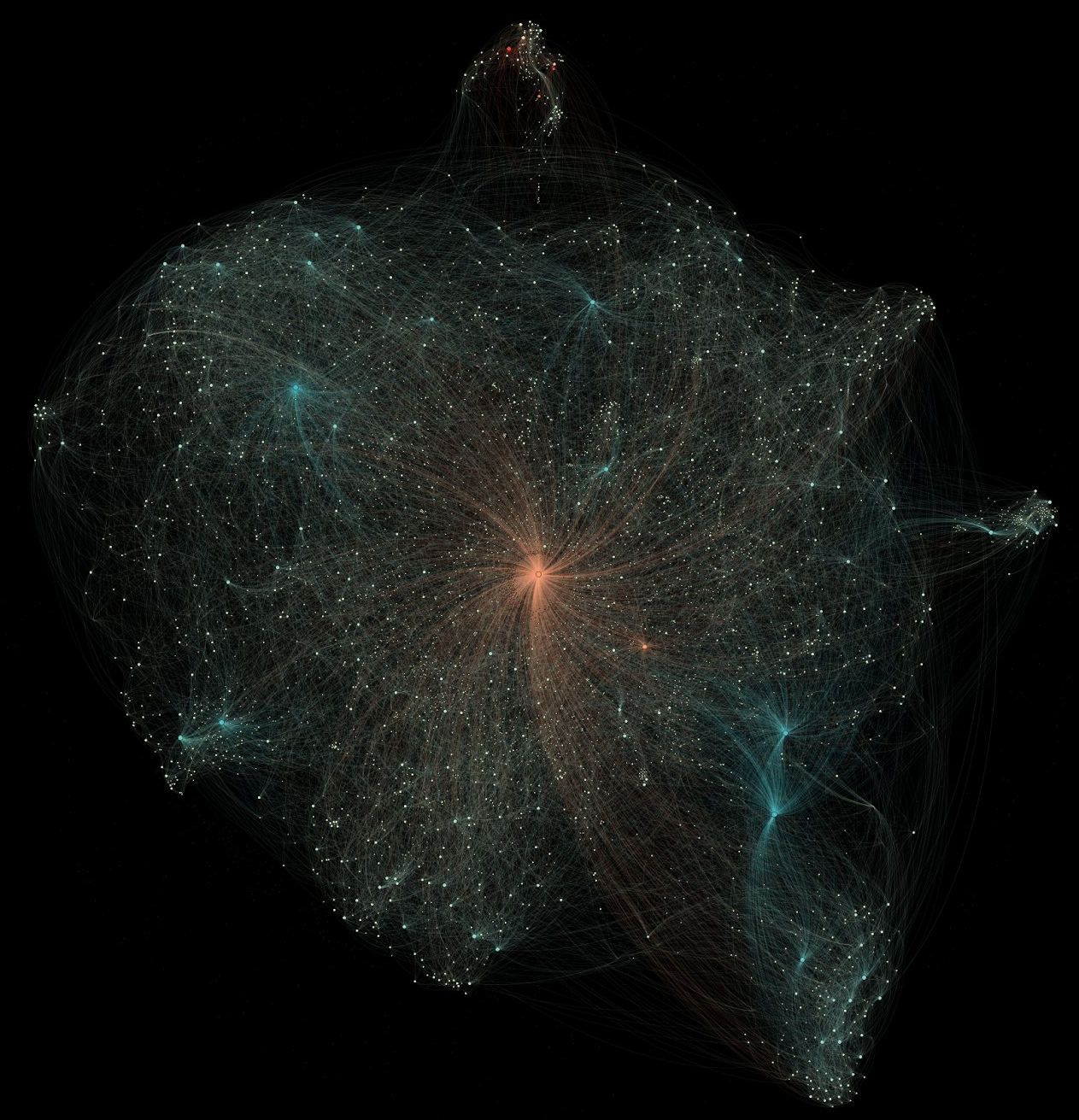

The open source universe

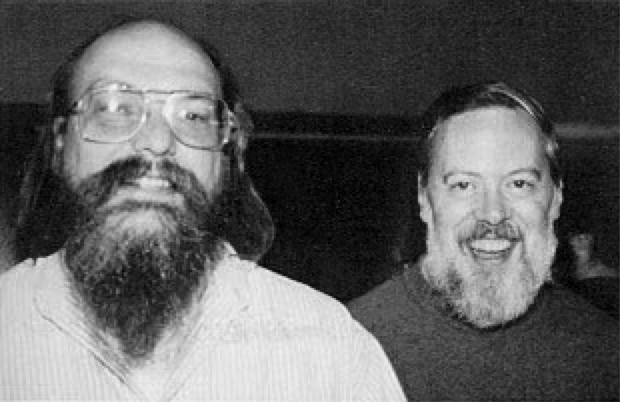

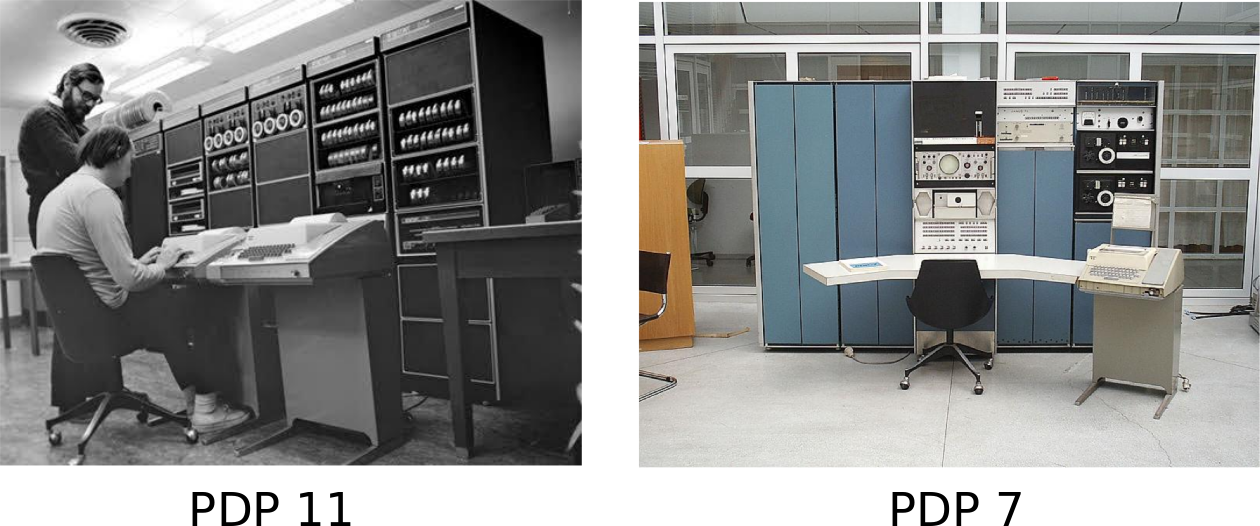

In the beginning

Our collaboration has been a thing of beauty. In the ten years that we have worked together, I can recall only one case of miscoordination of work. On that occasion, I discovered that we both had written the same 20-line assembly language program. I compared the sources and was astounded to find that they matched character-for-character. The result of our work together has been far greater than the work that we each contributed.

It began with a game

The Unix philosophy

- Write programs that do one thing and do it well

- Write programs to work together

- Write programs to handle text

- Keep it simple, stupid
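A small sketch of these ideas in action: each tool below does one job, and the pipe passes plain text from one to the next. The sample sentence and the variable names are made up for illustration.

```shell
# Sample text; any text stream would do.
text='the quick brown fox jumps over the lazy dog the end'

# tr puts each word on its own line, sort groups identical words,
# uniq -c counts each group, sort -rn puts the largest count first.
top_word=$(printf '%s\n' "$text" | tr -s ' ' '\n' | sort | uniq -c | sort -rn | head -n 1 | awk '{print $2}')

echo "most frequent word: $top_word"
```

None of these tools knows about the others; the only contract between them is lines of text.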

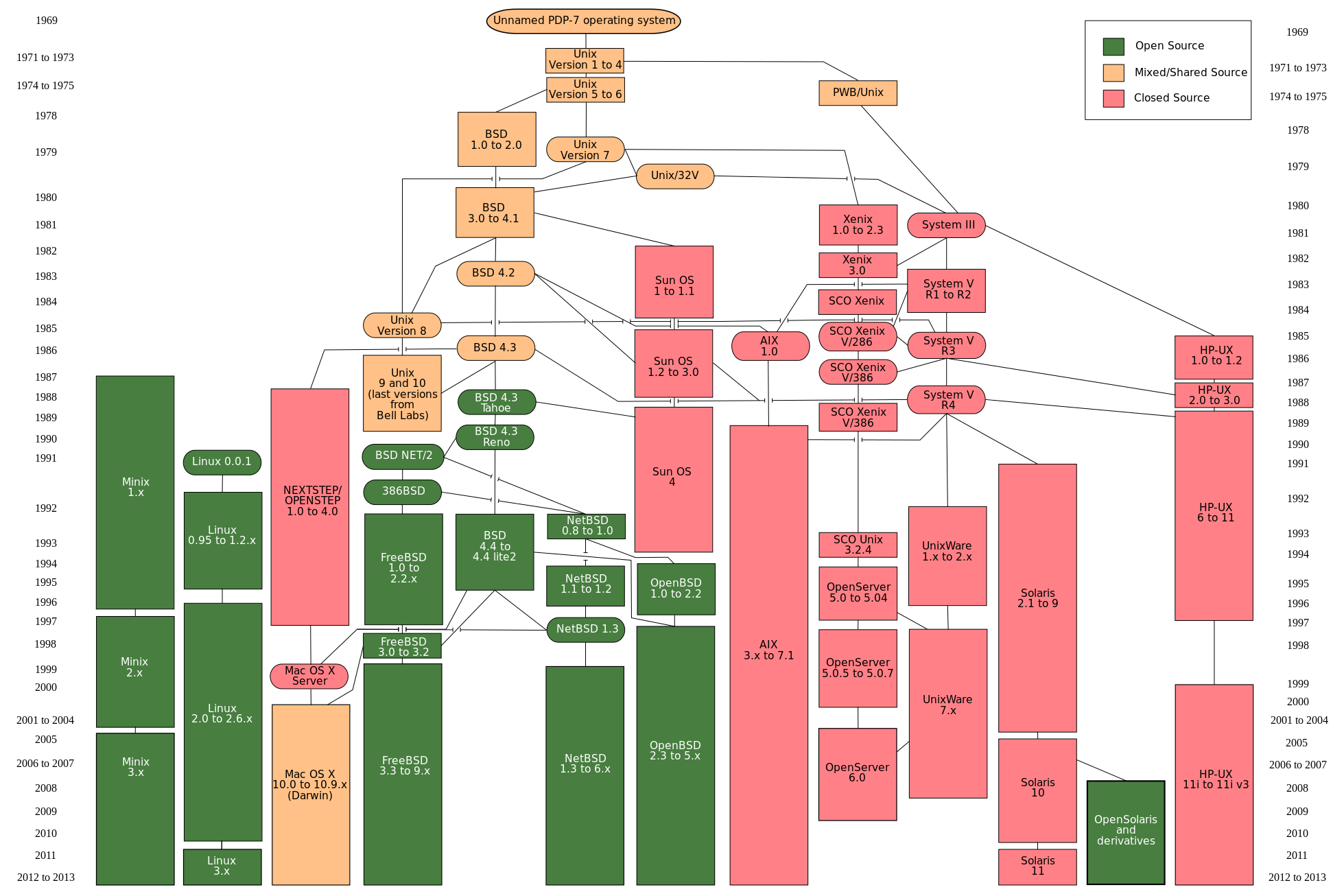

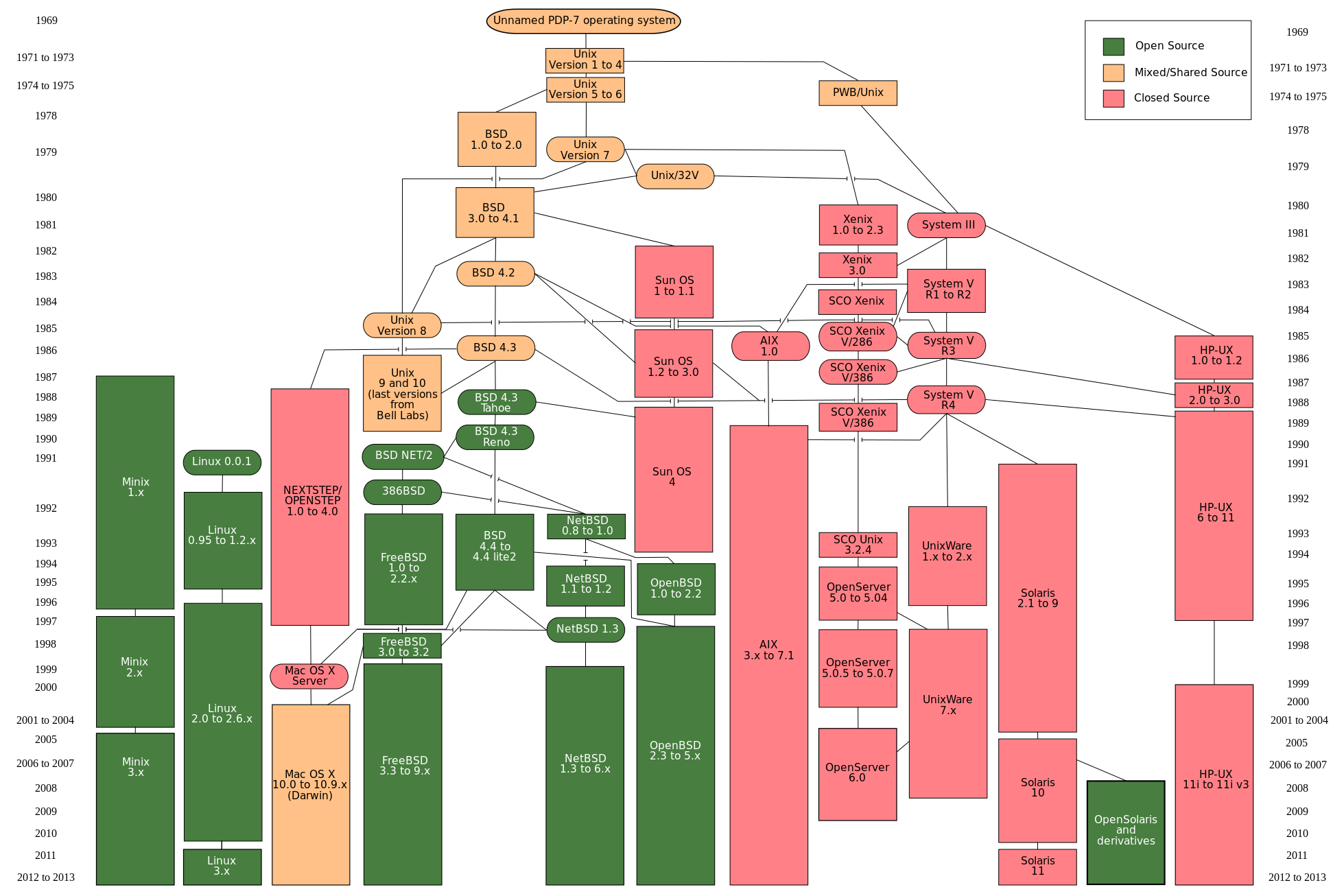

Unix family tree

The GNU project

- Think free as in free speech, not free beer

- Free to use, copy, change and rewrite

- Copyleft: If you copy, modify or include it, you have to give it away

Software licenses

- GNU General Public License (GPL)

- GNU Lesser General Public License (LGPL)

- Berkeley Software Distribution (BSD) License

Unix family tree

Linux

I’m doing a (free) operating system (just a hobby, won’t be big and professional like gnu) for 386(486) AT clones. This has been brewing since april, and is starting to get ready.

Linus Torvalds 25 August 1991

Linux

- "Software is like sex: it's better when it's free."

- Built using GNU toolchain (GCC, Bash shell)

- Debian, Ubuntu, Red Hat, Fedora, SUSE, etc.

- 96% of supercomputers run Linux

- Stallman calls it GNU/Linux

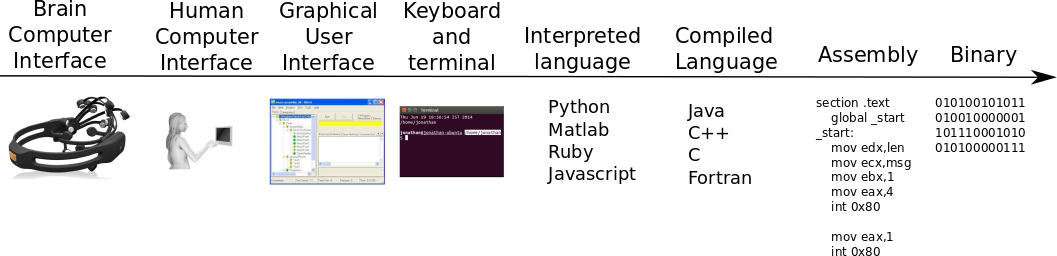

Programming 101

- Be actively lazy

- Don't code, glue

- It begins and ends with the command line

The sweet spot

Taco bell programming

- Do not overthink things

- Use pre-existing basic tools

- Functionality is an asset but code is a liability

The task

- Write a web scraper that pulls down a million web pages

- Search all pages for references to a given phrase

- Parallelise the code to run on a 32-core machine

The solution

- EC2 Elastic Compute Cloud?

- Hadoop NoSQL database?

- SQS ZeroMQ?

- Parallelising using Open MPI or MapReduce?

The ingredients

- cat

- wget

- grep

- xargs

Download a million webpages

cat webpages.txt | xargs wget
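To see what xargs will actually run before pointing it at a million URLs, one option is a dry run with echo in front of wget. This is a sketch only: the example.com URLs, the temporary file, and the batch size of 2 are assumptions chosen to keep the output short.

```shell
# Hypothetical URL list: five placeholder addresses, one per line.
urls=$(mktemp)
printf 'http://example.com/%s\n' 1 2 3 4 5 > "$urls"

# echo turns this into a dry run: the wget commands are printed, not executed.
# -n2 tells xargs to pass at most two URLs to each wget invocation.
plan=$(xargs -n2 echo wget -q -P pages < "$urls")
printf '%s\n' "$plan"

# Number of wget invocations xargs would have made.
batches=$(printf '%s\n' "$plan" | wc -l | tr -d ' ')
echo "$batches invocations"
```

Removing the echo runs the downloads for real. Without -n, xargs packs as many URLs into each invocation as the system's command-line length limit allows.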

Search for references to a given phrase...

grep -l reddit *.*

...and if found then do further processing

grep -l reddit *.* | xargs -n1 ./process.sh

Parallelise to run on 32 cores

grep -l reddit *.* | xargs -n1 -P32 ./process.sh
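The same pattern can be tried offline on a throwaway directory. In this sketch the three sample files are invented, basename stands in for ./process.sh, and -P4 is used instead of -P32 so it behaves the same on a small machine.

```shell
# Build a tiny corpus in a temporary directory.
workdir=$(mktemp -d)
printf 'a post from reddit\n' > "$workdir/a.html"
printf 'nothing to see here\n' > "$workdir/b.html"
printf 'reddit again\n' > "$workdir/c.html"

# grep -l prints only the names of files that contain the phrase.
# xargs -n1 hands one file name to each invocation; -P4 runs up to four at once.
# Parallel jobs finish in any order, so the results are sorted afterwards.
matches=$(grep -l reddit "$workdir"/*.html | xargs -n1 -P4 basename | sort)
printf '%s\n' "$matches"
```

File names containing spaces or newlines would need null-delimited handling, which this sketch leaves out.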

Turning it into a program

#!/bin/bash

cat webpages.txt | xargs wget -P pages

grep -l reddit pages/*.* | xargs -n1 -P32 ./process.sh

Command line interfaces:

- Use CloudCompare to subsample the cloud

- Use PCL to remove statistical outliers

- Use MeshLab to compute normals and do Poisson reconstruction

Turning it into a program

pcl_statistical_outlier centralsmall.asc -n10 -stddev 2

Cloudcompare centralsmall.asc -O subsample.asc -SS SPATIAL 0.1

meshlabserver -i ./subsample.asc -o ./meshed.ply -s poisson.mlx

Version Control

But I don't need it!

- Because you in the past hates you in the now

- final2014revisedb2bbb.zip

- No, dropbox will not do

- Software: Subversion / Git / Mercurial

- Websites: SourceForge / GitHub / Bitbucket

Look at it grow

link

But wait there's more!

link

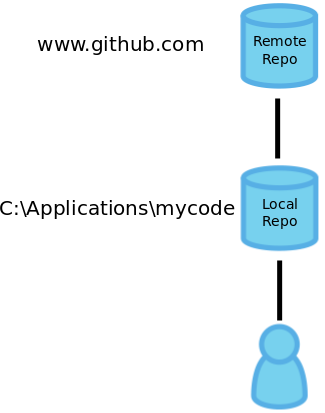

How does it work?
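One way to get a feel for the basic cycle without any server is a throwaway local repository. This is a minimal sketch: the file name, commit messages, and the example identity are all placeholders.

```shell
# Create an empty repository in a temporary directory.
repo=$(mktemp -d)
git -C "$repo" init -q

# Set an identity for this repository only, so the sketch also runs
# on a machine with no global git configuration.
git -C "$repo" config user.name "Example User"
git -C "$repo" config user.email "user@example.com"

# First snapshot: a new file has to be added before it can be committed.
echo "first draft" > "$repo/notes.txt"
git -C "$repo" add notes.txt
git -C "$repo" commit -q -m "add notes"

# Second snapshot: -a picks up changes to files git already tracks.
echo "second draft" >> "$repo/notes.txt"
git -C "$repo" commit -q -a -m "revise notes"

# Count the snapshots recorded so far.
commits=$(git -C "$repo" rev-list --count HEAD)
echo "$commits commits recorded"
```

Every commit is a snapshot that can be inspected or restored later, which is what the zip-file naming scheme tries and fails to do.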

Setting it up

git clone https://github.com/cloudcompare/trunk.git

Saving changes

git commit -a -m "fixed a nasty bug in pdf writer"

git push

Getting updates

git pull

Collaboration makes the code grow stronger

github

Optimising your code

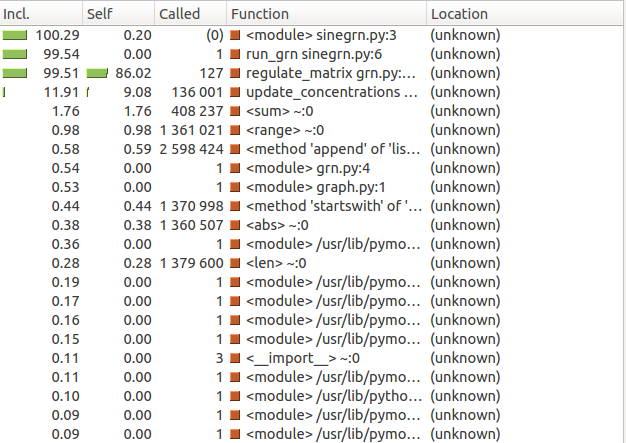

Profiling

- You cannot compile with your eyes

- A profiler is the only way to see where your code really spends its time

- Causes code to run veeeery slowly

- Use timestamps if you are lazy
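The lazy timestamp approach can be as simple as recording the clock before and after a step. In this sketch, sleep 1 is a stand-in for the slow part of a real program.

```shell
# Seconds since the epoch before the step starts.
start=$(date +%s)

# Stand-in workload; replace with the command being measured.
sleep 1

# Seconds since the epoch after the step ends.
end=$(date +%s)

elapsed=$((end - start))
echo "step took ${elapsed}s"
```

This only has one-second resolution and says nothing about which function is slow, which is where a real profiler takes over.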

Profilers

- MATLAB: profile()

- Java: YourKit

- Python: cProfile module

- C++: gprof

Profiling example

Testing

- You should be testing your code

- Eyeballing is a start

- Do not ignore misbehaving code

- Have a baseline to test against

- Unit tests

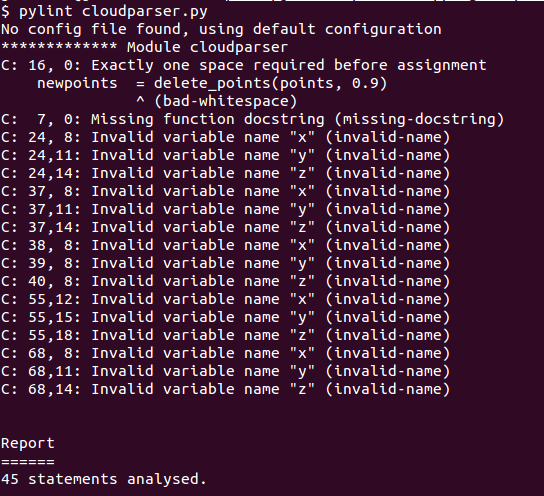

- Static code analysis
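The baseline idea from the list above can be sketched with nothing more than a saved known-good output and a file comparison. Here sort -n stands in for the program under test, and the file names are invented for the example.

```shell
workdir=$(mktemp -d)

# Known-good output, written by hand and kept alongside the code.
printf '1\n2\n3\n' > "$workdir/baseline.txt"

# Run the program under test (sort -n is the stand-in) on a fixed input.
printf '3\n1\n2\n' | sort -n > "$workdir/actual.txt"

# cmp -s is silent and succeeds only if the two files are identical.
if cmp -s "$workdir/baseline.txt" "$workdir/actual.txt"; then
    result=PASS
else
    result=FAIL
fi
echo "$result"
```

Re-running this after every change turns eyeballing into a repeatable check.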

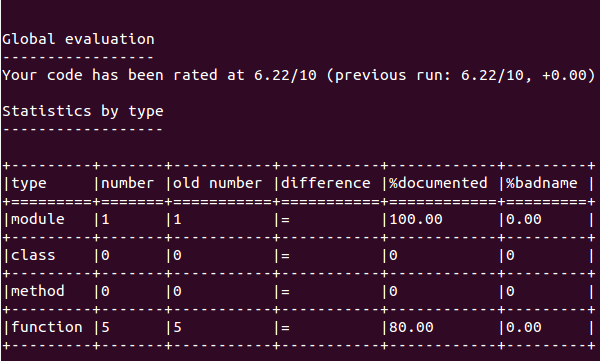

Pylint

Incentives

Static code analysis

Parallelising your code

Problems fall into two categories:

- Pleasingly parallel

- Disconcertingly serial

Pleasingly parallel

- Rendering frames in computer animation

- Functional programming (map/reduce)

- Simulations for independent scenarios

- Genetic algorithms

Disconcertingly serial

- State-based calculations

- Writing to a single resource (I/O bound problems)

- Single global weather simulation

Amdahl's law

the effort expended on achieving high parallel processing rates is wasted unless it is accompanied by achievements in sequential processing rates of very nearly the same magnitude.
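The quote is usually summarised by a formula that is not on the slide; it is given here in its common textbook form. Let $p$ be the fraction of the run time that can be parallelised and $N$ the number of processors. The speedup is then

$$
S(N) = \frac{1}{(1 - p) + \dfrac{p}{N}}
$$

As a worked example, assume $p = 0.9$ (an illustrative figure, not a measurement) on a 32-core machine:

$$
S(32) = \frac{1}{0.1 + \dfrac{0.9}{32}} = \frac{1}{0.128125} \approx 7.8,
\qquad
\lim_{N \to \infty} S(N) = \frac{1}{1 - p} = 10
$$

Under that assumption, 32 cores give less than an 8x speedup, and no number of cores can exceed 10x, because the serial 10% always remains.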

Example: rendering an animation

- parallelise a single frame

- parallelise a sequence of frames

- parallelise the scenes
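Parallelising over a sequence of frames is the pleasingly parallel case, since no frame depends on another. A rough sketch, assuming a renderer that takes a frame number: the sh -c one-liner below is a hypothetical stand-in that just writes a text file per frame.

```shell
outdir=$(mktemp -d)

# seq emits the frame numbers 1..8, one per line.
# xargs -I{} runs one job per line; -P4 keeps up to four jobs running at once.
# The sh -c script receives the frame number as $1 and the output directory as $2.
seq 1 8 | xargs -P4 -I{} sh -c 'echo "frame $1 rendered" > "$2/frame_$1.txt"' _ {} "$outdir"

# Count the frames that were produced.
frames=$(ls "$outdir" | wc -l | tr -d ' ')
echo "$frames frames rendered"
```

Each job writes its own file, so the jobs never contend for a shared resource.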

How to program

- Write small programs

- Glue together existing code

- Use version control

- If your code is slow, profile it

- Test your code

- Know at least one command line editor: vi, Emacs, nano

- Do not ignore misbehaving code

- Every time you use your mouse you have failed as a programmer

Command line tips

- man

- use tab to autocomplete

- keep a record of your commands

Setting up an alias:

- vi ~/.bashrc

- alias cmd="vi ~/Documents/commands.txt"
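Alongside the alias, a small shell function can append commands to the record without opening an editor. This is a sketch under assumptions: note is a hypothetical helper name, and a temporary file is used here where daily use would point at something like ~/Documents/commands.txt.

```shell
# File that collects the commands; temporary here so the sketch leaves no trace.
commands_file=$(mktemp)

# note: hypothetical helper that appends its arguments as a single line.
note() {
    printf '%s\n' "$*" >> "$commands_file"
}

# Record two commands worth remembering.
note 'grep -l reddit pages/*.* | xargs -n1 -P32 ./process.sh'
note 'git commit -a -m "message"'

cat "$commands_file"
```

Placing the function in ~/.bashrc next to the alias would make it available in every new terminal.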

sudo

sudo aptitude install libpcl-all

Command line tutorial

- ls

- cd

- mkdir

- touch

- aptitude search

- aptitude install
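A short practice session with the first four commands, run inside a temporary directory so nothing on the machine is disturbed. The directory and file names are arbitrary.

```shell
# A scratch area to practise in.
sandbox=$(mktemp -d)

# mkdir creates a directory; cd moves into it.
mkdir "$sandbox/project"
cd "$sandbox/project"

# touch creates an empty file (or updates the timestamp of an existing one).
touch notes.txt

# ls lists the contents of the current directory.
listing=$(ls)
echo "$listing"
```

The two aptitude commands are left out because they need a Debian-based system, and installing also needs sudo.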

The only book you will ever need:

"C is not a big language, and it is not well served by a big book"